NotPetya: The Most Expensive Cyberattack of All Time


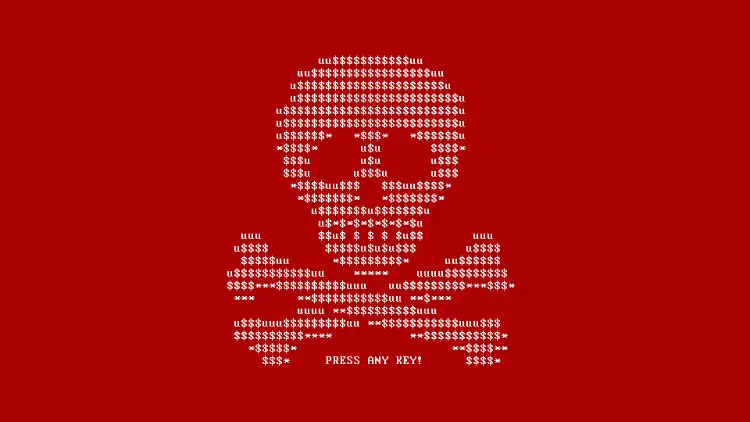

In late June 2017, something started in Ukraine that, at first glance, looked like “ransomware as usual”: machines reboot, files suddenly become unavailable, and a ransom note appears on screen.

Just a few hours later, it was clear: this was not a local incident. This was a wildfire.

The name that stuck is NotPetya. To this day, it is a textbook example of how a seemingly “simple malware wave” can turn into an event that cripples companies worldwide and causes damage in the billions.

In this post I want to connect two things:

- The story behind NotPetya: what happened back then, and why did it get so expensive?

- The practical perspective: what does this mean for “normal” companies today—not just Fortune 500 giants?

The uncomfortable part about NotPetya is not that it was “too complex”. The uncomfortable part is: it is frighteningly realistic.

Why am I writing this in 2026? Because today we talk about AI-driven deepfake phishing attacks, yet when things go sideways, we still fail at the same boring basics as in 2017: missing segmentation, and backups that hang on the same lifeline as production.

NotPetya in 5 minutes: what happened?

To put this into context, let us walk through the sequence of events.

On June 27, 2017, NotPetya first showed up in Ukraine and then spread globally at high speed. A key factor was a compromised update of an accounting software package widely used in Ukraine (M.E.Doc). The malware did not come through “one dumb click”, but via a mechanism companies use every day: updates.

And that is something many people underestimate in hindsight: at its core, this was a supply-chain attack. M.E.Doc was not a random target—it was the perfect lever because updates are trusted by design.

The lesson for today is uncomfortable but important: you can do a lot right internally, but if software from your accountant, a vendor, an ERP connector, or a service provider gets compromised, you are still in the blast radius. Supply-chain risk is not a “cloud problem”; very concretely, it is an update problem.

Once NotPetya was inside a network, it stopped being about single endpoints and became about lateral movement:

- Via SMB and known exploits (EternalBlue and EternalRomance, both leaked from an NSA toolset and targeting the SMBv1 flaws patched by MS17-010), NotPetya could spread to other Windows systems without user interaction.

- In parallel, it dumped credentials from LSASS memory (with a Mimikatz-like component) to obtain passwords and hashes, so it could move on with real admin credentials.

- For the actual remote execution, it used “normal” admin tooling (e.g., PsExec/WMI). That combination is so dangerous because it can look like “regular operations” in your logs at first.

That was the “secret sauce”: an exploit for fast hops to new systems, credentials pulled from memory for real privileges—and then scaling via built-in tools.

The important point: patching alone would not have been enough here. Even if a system was patched against EternalBlue, it could still fall once the malware had harvested valid admin credentials/hashes from somewhere else in the network.

NotPetya also played with time: instead of triggering the “big bang” (reboot/wipe) immediately, it scheduled it for some time after infection. That makes detection harder and, as a side effect, follows a classic sandbox-evasion pattern: the malware does not “detonate” right away, while in the background it is already scaling.
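What does that look like in telemetry? Public analyses describe NotPetya scheduling its forced reboot with a one-time scheduled task that runs `shutdown.exe /r /f`. A minimal sketch, assuming you already collect process command lines (for example from EDR or Sysmon), could flag that pattern. The regex and the sample command lines are illustrative assumptions, and legitimate maintenance jobs will match too, so treat hits as leads, not verdicts.

```python
import re

# Illustrative pattern: "schtasks ... /create ... shutdown[.exe] /r".
# Assumption: this mirrors the forced-reboot task described in public NotPetya
# analyses; attackers can trivially vary it (e.g. at.exe instead of schtasks).
SCHEDULED_REBOOT = re.compile(
    r"\bschtasks(?:\.exe)?\b.*\s/create\b.*\bshutdown(?:\.exe)?\s+/r\b",
    re.IGNORECASE,
)


def suspicious_reboot_tasks(command_lines):
    """Return the command lines that create a scheduled task forcing a reboot."""
    return [cmd for cmd in command_lines if SCHEDULED_REBOOT.search(cmd)]


# Hypothetical process-creation command lines collected from endpoints.
events = [
    r'schtasks /RU "SYSTEM" /Create /SC once /TN "" /TR "C:\Windows\system32\shutdown.exe /r /f" /ST 14:23',
    "schtasks /Query /FO LIST",
    "shutdown.exe /r /t 0",
]

for hit in suspicious_reboot_tasks(events):
    print("ALERT:", hit)
```

Only the first line matches: a plain query does not create a task, and a direct `shutdown` call does not go through the scheduler.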

The end result is exactly what you always see in situations like this:

- First, a few systems start acting “weird”.

- Then an entire site suddenly tips over.

- And if you do not react extremely fast (disconnect networks, disable accounts, close segmentation boundaries), the incident can go multi-site.

Why “ransomware” was just camouflage

NotPetya was perceived as ransomware in many places because it displayed a ransom demand. Technically and practically, it was something else.

The key point: in reality, NotPetya was a wiper, malware whose purpose is not to make money but to destroy systems and disrupt operations.

That may sound like semantics. In an incident, it makes a huge difference:

- With classic ransomware, there is (sometimes) a chance to recover data via keys or negotiation.

- With a wiper, the goal is “broken”. Sending Bitcoin does not help you.

With NotPetya there is a technical detail that underlines the wiper character even more: it overwrote the MBR (Master Boot Record) and encrypted the MFT (Master File Table). And even the “ransomware” mechanics were implemented incorrectly: the displayed installation ID was randomly generated and not properly linked to a usable decryption key. In other words: there was essentially no technical way back.

That is exactly why NotPetya was so nasty: it pushed many companies into the wrong incident response playbook at first. You think “ransomware playbook”, but you actually need a “disaster recovery playbook”.

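That the attack was more about destruction than money also fits the later attribution: in February 2018, the UK and U.S. governments, among others, publicly attributed NotPetya to the Russian military, and in 2020 the U.S. DOJ charged six officers of Russia's GRU military intelligence service, including for NotPetya.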

10 billion dollars: why did it get so expensive?

If you only look at the “ransomware” label, the damage can be hard to explain at first. “So you just restore from backup, right?”

In practice, NotPetya was so expensive mainly because it hit the area where companies tend to underinvest: availability.

The biggest cost block was not the malware itself, but downtime.

- Production lines stop.

- Logistics goes back to paper.

- Identities and domains need to be rebuilt.

- Applications, integrations, and dependencies start falling over one after another.

- And it does not happen in one system, but in many at the same time.

That is why NotPetya is often called “the most expensive cyberattack of all time”: according to a White House estimate cited by WIRED, total damages exceeded 10 billion US dollars.

You can also see it in individual company numbers: Merck, FedEx (including TNT Express), and Mondelez described in very concrete terms that this was not “an IT thing”—it was a business issue with real impact on revenue, costs, and delivery capability.

NotPetya also had a huge legal aftermath: companies fought with their insurers. Some insurers tried to deny coverage by classifying NotPetya as an “act of war” (i.e., a state-directed attack). A well-known example is Mondelez’s dispute with its insurer, Zurich. For companies, this is still a hard lesson: does my cyber insurance cover state-backed attacks, or will a war exclusion kick in when it matters?

Update for experts: the Mondelez case ended in a settlement in 2022. Since NotPetya, the market has tightened its policy wording in many places: many policies now define “state-backed” attacks more precisely or exclude them explicitly. If you do not actually understand the wording, your cyber insurance itself becomes a very real financial risk.

What you should not underestimate: even if you “only” restore IT systems, you are fighting dependencies.

One example that shows up in many retrospectives is Maersk: this was not about a few encrypted files, but about rebuilding core systems, identities, and operational IT so that ports and logistics could even restart.

The story behind it is legendary because it shows perfectly how fragile identities and backups can be in practice: Maersk essentially lost its entire Active Directory, except for a single domain controller in Ghana that happened to be offline at the time of the attack due to a power outage. That stroke of luck turned it into an accidental offline backup: with that one physical server, Maersk could reconstruct its global AD and speed up recovery.

In the end, this does not just hit “IT”, but the business directly:

- For a food company: production, planning, and supply chains stop working.

- For a shipping company: containers do not move.

- For a pharma company: manufacturing, quality processes, and delivery capability come under pressure.

And that is why the number is so high: the ransom screen is only the symptom. The real damage is downtime.

A practical scenario: the incident I would expect at a “normal” company

I want to describe a case that intentionally does not feel like Hollywood. Not “state actor vs. big tech”, but a scenario I consider plausible for a typical mid-sized company.

Imagine a mid-sized company:

- One main site, a small second site.

- A classic Windows setup: AD, file servers, a few VMs, an ERP system.

- Plus a handful of systems you avoid touching for good reasons: machine controllers, old niche software, a “Windows Server 2008 something”, because the vendor never caught up.

- Backups exist: daily to a NAS, weekly additionally to a second system.

And here is the point many underestimate:

This company does not have “too little security” because everyone is incompetent. It is because operations and time always win.

Patching gets postponed because production planning is tight. Segmentation gets pushed to “later” because VLANs are work. Local admin passwords have grown historically and are often the same on many machines. And of course the NAS is online, because restores would otherwise be too annoying.

The timeline (realistic, not dramatic)

On a Tuesday morning, an update comes in. It could be a supplier, a service-provider tool, a connector—anything that auto-updates. Or it is a compromised system at a partner who has VPN access.

- 08:10: first clients become unstable. A reboot here, a weird error there.

- 08:25: the first file server feels slow. Helpdesk tickets spike.

- 08:40: suddenly “the domain is weird”. Logins take forever, group policy behaves strangely.

- 09:00: an admin logs in “just to take a quick look”. That is often the moment lateral movement really accelerates.

- 09:20: an entire site is effectively offline.

And now comes the part you only truly understand in hindsight:

If you do not hard-disconnect immediately in that moment, damage does not scale linearly—it scales exponentially.
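To see why the first minutes matter so much, here is a deliberately simplified toy model, not a model of NotPetya itself: every infected host compromises a fixed number of new hosts per time step, capped at the size of a flat network, and spreading stops once you hard-disconnect. The host count, the infection rate, and the 5-minute step are made-up assumptions.

```python
from typing import List, Optional


def spread(initial: int, new_per_host: int, hosts: int, steps: int,
           disconnect_after: Optional[int] = None) -> List[int]:
    """Toy model: number of infected hosts after each time step in a flat network.

    Each infected host compromises `new_per_host` further hosts per step
    (capped at `hosts`). After step `disconnect_after`, no new infections occur.
    """
    infected = initial
    history = [infected]
    for step in range(1, steps + 1):
        if disconnect_after is None or step <= disconnect_after:
            infected = min(hosts, infected * (1 + new_per_host))
        history.append(infected)
    return history


# Hypothetical: 2,000 Windows hosts, one patient zero, 5-minute steps.
print(spread(1, 2, 2000, steps=8))                      # [1, 3, 9, 27, 81, 243, 729, 2000, 2000]
print(spread(1, 2, 2000, steps=8, disconnect_after=3))  # [1, 3, 9, 27, 27, 27, 27, 27, 27]
```

In this toy setup, disconnecting after 15 minutes caps the damage at 27 machines; waiting another 20 minutes means rebuilding the entire network. Real spread is messier (credential reuse, patch levels, segmentation), but the shape of the curve is the point.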

And of course the question that always comes next is:

“Do we have to pay?”

With NotPetya, the bitter answer was: even if you wanted to, it most likely would not have been a solution. Psychologically, that changes the incident a lot, because you have to shift your mindset immediately to “rebuild”, not “decrypt”.

The longer you wait, the more systems must be rebuilt. The more systems must be rebuilt, the longer you operate in emergency mode. And the longer you operate in emergency mode, the more expensive it gets.

What actually hurts

After a few hours it becomes clear: this is not “we restore a few files”. This is “we rebuild Active Directory and core systems”.

And then you realize:

- Your backups were online and got hit too.

- Your documentation is not up to date.

- You do not have enough “golden images” to reimage quickly.

- Admin credentials were everywhere.

- Nobody has ever practiced how to segment a site cleanly in 30 minutes.

That is the moment when an IT incident turns into a company crisis.

What a SOC does differently today (and what AI has to do with it)

In a large Security Operations Center (SOC), billions of events from many sources flow in every day. This is no longer “an admin looking at the firewall log”, but an industrialized process of telemetry, correlation, automation, and human analysis.

What I find interesting is the split of roles:

- AI/automation as first line: it sorts, correlates, detects patterns, and filters the obvious.

- Humans as second/third line: when a case is critical or unclear, analysts take over, decide measures, and coordinate response.

That sounds like “only for enterprises”, but the lesson matters for small companies too:

You do not have to build a SOC yourself. But you need the same mindset:

- Collect signals (EDR, firewall, auth logs).

- Define what “normal” looks like.

- And have a process that reacts in minutes, not days.

NotPetya was already so fast in 2017 that no human could “react quickly enough” once the spread was in motion. The point of AI is therefore less “even faster”, and more: it helps you find the anomaly in the noise earlier (e.g., when at 03:00 suddenly 500 machines want to talk SMB to each other). Those minutes are what decide the outcome: see earlier, contain earlier, isolate earlier.
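The 03:00 SMB example translates into a very simple detection rule: count how many distinct internal peers each host contacts on TCP port 445 within a time window, and flag hosts far above their normal level. A minimal sketch, assuming flow records as (source, destination, port) tuples from a firewall or NetFlow export; the threshold is a placeholder you would derive from your own baseline.

```python
from collections import defaultdict

SMB_PORT = 445


def smb_fanout(flows, threshold):
    """Return {source_ip: distinct_smb_peers} for sources above `threshold`.

    `flows` is an iterable of (src_ip, dst_ip, dst_port) tuples from one time window.
    """
    peers = defaultdict(set)
    for src, dst, port in flows:
        if port == SMB_PORT:
            peers[src].add(dst)
    return {src: len(dsts) for src, dsts in peers.items() if len(dsts) > threshold}


# Hypothetical 10-minute window: one workstation talks to its two file servers,
# another suddenly opens SMB connections to 40 different machines.
window = [("10.10.1.20", "10.20.0.10", 445), ("10.10.1.20", "10.20.0.11", 445)]
window += [("10.10.1.23", f"10.10.2.{i}", 445) for i in range(1, 41)]
window += [("10.10.1.23", "10.20.0.10", 443)]  # HTTPS, ignored

print(smb_fanout(window, threshold=10))  # {'10.10.1.23': 40}
```

Rules like this are exactly the kind of first-line filtering that automation does well; deciding whether 40 peers is an attack or a misconfigured backup agent is where the human analyst comes in.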

And then there is the mindset that I increasingly see as the default in modern security concepts:

Assume breach.

Plan as if the attacker is already inside. Not out of paranoia, but out of pragmatism.

What I want to see as a minimum today (without an enterprise budget)

If you take one thing from NotPetya, take this:

NotPetya was not “one vulnerability”. NotPetya was a chain of weaknesses amplifying each other.

The good news: that is exactly why a few basics can remove a lot of risk.

1) Patch and exposure management (actually take it seriously)

- Critical Windows updates (especially around SMB) cannot be “sometime later”.

- Anything reachable from the outside gets priority.

- And if you need legacy: at least segment it, with clear rules.
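“Anything reachable from the outside gets priority” is easy to encode as a sort order. A minimal sketch, assuming a patch backlog where each entry is tagged by hand or by your scanner; the fields and the ordering (internet exposure first, then flaws exploitable over the network without user interaction, like the SMB bugs NotPetya abused, then CVSS score) are one reasonable choice, not a standard.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MissingPatch:
    host: str
    patch_id: str
    internet_exposed: bool
    remote_no_user_interaction: bool  # e.g. wormable SMB/RDP flaws
    cvss: float


def patch_order(backlog: List[MissingPatch]) -> List[MissingPatch]:
    # False sorts before True, so negate the flags to put exposed/wormable first;
    # within a group, higher CVSS comes first.
    return sorted(backlog, key=lambda p: (not p.internet_exposed,
                                          not p.remote_no_user_interaction,
                                          -p.cvss))


# Hypothetical backlog (host names, patch IDs and scores are made up).
backlog = [
    MissingPatch("ws-017", "patch-c", False, False, 9.0),
    MissingPatch("fs01", "patch-b", False, True, 8.1),
    MissingPatch("vpn-gw", "patch-a", True, True, 7.5),
]
for p in patch_order(backlog):
    print(p.host, p.patch_id)
```

Note that the internet-facing VPN gateway comes first even though its score is the lowest: exposure beats the raw number.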

2) Make lateral movement painful

- Separate admin accounts (day-to-day vs. admin).

- Use unique local admin passwords per device (LAPS).

- Do not leave remote admin tools (WMI, PsExec) wide open everywhere.

- Restrict SMB (TCP port 445) wherever it is not truly needed, especially between clients.
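Why separate admin accounts matter becomes obvious once you look at where privileged credentials actually end up: every machine where a domain admin has logged on interactively or via RDP (Windows logon types 2 and 10) is a machine from which a Mimikatz-style tool may harvest them. A minimal sketch, assuming you can export such logons as (account, host) pairs, for example from security event 4624; all account and host names are hypothetical.

```python
def exposed_privileged_logons(sessions, privileged_accounts, tier0_hosts):
    """Return sorted (account, host) pairs where a privileged account logged on
    to a machine outside tier 0, i.e. where its credentials are exposed."""
    return sorted(
        {(acct, host) for acct, host in sessions
         if acct in privileged_accounts and host not in tier0_hosts}
    )


# Hypothetical data: names are illustrative only.
PRIVILEGED = {"adm-jdoe", "Administrator"}
TIER0 = {"dc01", "dc02"}
sessions = [
    ("jdoe", "ws-017"),          # day-to-day account on a workstation: fine
    ("adm-jdoe", "dc01"),        # admin account on a domain controller: fine
    ("adm-jdoe", "ws-017"),      # "just a quick look": admin credentials now on a client
    ("Administrator", "fs01"),   # domain admin on a member server
]

for acct, host in exposed_privileged_logons(sessions, PRIVILEGED, TIER0):
    print(f"{acct} logged on to {host}: credentials exposed outside tier 0")
```

Each hit is a host that, once compromised, hands the attacker privileges far beyond that host, which is exactly the shortcut NotPetya exploited.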

3) Segmentation, but pragmatically

You do not have to implement “perfect” zero trust immediately. But:

- Clients, servers, backups, and OT/production do not belong in one flat network.

- Domain controllers are tier 0 and must be protected accordingly.

- East-west traffic deserves as much attention as internet traffic.
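Pragmatic segmentation mostly means writing down which zone may talk to which, and denying everything else. A minimal sketch of such a default-deny policy, assuming hypothetical subnets and a deliberately incomplete allow list (real Active Directory traffic needs more ports, such as DNS, LDAP, and RPC, and protocols are simplified to one port each):

```python
import ipaddress

# Hypothetical zones and subnets; replace with your own addressing plan.
ZONES = {
    "clients": ipaddress.ip_network("10.10.0.0/16"),
    "servers": ipaddress.ip_network("10.20.0.0/16"),
    "tier0": ipaddress.ip_network("10.30.0.0/24"),   # domain controllers
    "backup": ipaddress.ip_network("10.40.0.0/24"),
    "ot": ipaddress.ip_network("10.50.0.0/16"),
}

# Default deny: only these (source zone, destination zone, port) flows are allowed.
ALLOWED = {
    ("clients", "servers", 443),  # web apps / ERP front end
    ("clients", "servers", 445),  # file shares: clients -> file servers only
    ("clients", "tier0", 88),     # Kerberos
    ("servers", "tier0", 88),
    ("backup", "servers", 443),   # backup server pulls data from agents
}


def zone_of(ip: str) -> str:
    addr = ipaddress.ip_address(ip)
    for name, net in ZONES.items():
        if addr in net:
            return name
    return "unknown"


def is_allowed(src_ip: str, dst_ip: str, port: int) -> bool:
    return (zone_of(src_ip), zone_of(dst_ip), port) in ALLOWED


print(is_allowed("10.10.1.5", "10.20.0.10", 445))  # True: client -> file server
print(is_allowed("10.10.1.5", "10.10.2.7", 445))   # False: client -> client (east-west)
print(is_allowed("10.20.0.10", "10.40.0.2", 445))  # False: nobody pushes into the backup zone
```

The design choice worth copying is the direction of the backup rule: in this sketch the backup zone initiates connections, so a compromised server cannot simply write into it.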

4) Backups: offline, immutable, tested

I will say it the way I keep seeing it in projects:

A backup that is always writable and always online is often not a backup when it matters.

- 3-2-1 (three copies of your data, on two different media, one of them offsite) is a good start.

- Immutable storage (or offline media) is a game changer.

- Restore tests are mandatory. Not “we have backups”, but “we practiced restores”.
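These three points can be checked against your backup inventory. A minimal sketch, assuming each copy of the data (production included) is described by a small record; the field names and the 90-day restore-test interval are assumptions, not a standard. The offline/immutable check corresponds to the extra “1” in what is sometimes written as 3-2-1-1-0.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class DataCopy:
    name: str
    media: str                  # e.g. "disk", "nas", "tape", "object-storage"
    offsite: bool               # physically at another location
    offline_or_immutable: bool  # cannot be modified from the production network


def check_backups(copies: List[DataCopy], last_restore_test: Optional[date],
                  today: date, max_test_age_days: int = 90) -> List[str]:
    """Return findings against 3-2-1, one offline/immutable copy, and tested restores."""
    findings = []
    if len(copies) < 3:
        findings.append("fewer than 3 copies of the data")
    if len({c.media for c in copies}) < 2:
        findings.append("fewer than 2 different media types")
    if not any(c.offsite for c in copies):
        findings.append("no offsite copy")
    if not any(c.offline_or_immutable for c in copies):
        findings.append("no offline or immutable copy")
    if last_restore_test is None or today - last_restore_test > timedelta(days=max_test_age_days):
        findings.append(f"no restore test within the last {max_test_age_days} days")
    return findings


# The mid-sized company from the scenario above (hypothetical values,
# assuming the weekly second system sits at the second site).
copies = [
    DataCopy("production", "disk", offsite=False, offline_or_immutable=False),
    DataCopy("daily NAS", "nas", offsite=False, offline_or_immutable=False),
    DataCopy("weekly second system", "nas", offsite=True, offline_or_immutable=False),
]
for finding in check_backups(copies, last_restore_test=None, today=date(2026, 1, 15)):
    print("-", finding)
```

On paper, this setup even passes 3-2-1. It still fails the two checks that mattered with NotPetya: every copy is reachable and writable from the network, and nobody knows whether a restore actually works.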

5) Incident runbooks and a “kill switch” for sites

You do not need an 80-page handbook. But you do need clarity:

- Who decides a site gets disconnected?

- How do you disable accounts fast?

- Which systems must come back first (AD, DNS, DHCP, VPN, ERP)?

And yes: you have to practice this, or it will not help you during an incident.
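“Which systems must come back first” is a dependency problem, and writing the dependencies down makes the restore order explicit. A minimal sketch using Python's standard `graphlib` module (Python 3.9+); the dependency map is a hypothetical example, and in many real environments AD and AD-integrated DNS come back together on the same domain controller.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: system -> systems that must be running first.
DEPENDS_ON = {
    "DNS": set(),
    "AD": {"DNS"},
    "DHCP": {"AD"},
    "VPN": {"AD"},
    "File servers": {"AD"},
    "ERP": {"AD", "File servers"},
}


def recovery_waves(depends_on):
    """Group systems into waves; everything in one wave can be restored in parallel."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


for number, wave in enumerate(recovery_waves(DEPENDS_ON), start=1):
    print(f"Wave {number}: {', '.join(wave)}")
```

If the map contains a cycle, `prepare()` raises `CycleError`, which is itself a useful finding for the runbook: it means nobody has decided how to break the loop during a rebuild.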

Reality check: with NotPetya, the most effective measure in many cases was not “another tool”, but physical separation. There are reports that at Maersk, people literally ran through offices and pulled power/network cables from switches to stop the spread. It sounds basic—but sometimes it is the best high-tech security when seconds count.

Conclusion

To this day, NotPetya is one of the best examples of why IT security is not solved by “we have antivirus”.

The attack was not so devastating because it was magical. It was devastating because it hit very normal weak points:

- trust in updates

- too much lateral movement

- too little segmentation

- backups that are too close to the production network

And that is exactly why it is so relevant for “normal” companies.

If you invest today, do not just invest in tools—invest in resilience: visibility, fast response, and the ability to restore systems cleanly. That is the difference between “a very bad day” and “a quarter you will never catch up on”.

Sources and further reading

- CISA: Petya Ransomware (incl. NotPetya) (TA17-181A)

- U.S. DHS Blog (Archive): The NotPetya Ransomware Attack

- WIRED: The Untold Story of NotPetya, the Most Devastating Cyberattack in History

- UK Government: Foreign Office Minister condemns Russian govt cyber attack (NotPetya)

- U.S. DOJ: Six Russian intelligence officers charged (GRU) incl. NotPetya

- Merck: Form 10-K (2017) (SEC EDGAR)

- FedEx: Quarterly results (2017) mentioning TNT/NotPetya impacts

- Mondelez International: 2017 Fourth Quarter and Full Year Results (NotPetya impact)

Until next time, Joe